When I first heard the story of the Potemkin village, it fascinated me. Picture this: it was 1787, and Prince Grigory Potemkin wanted to impress his lover, Empress Catherine the Great, during her journey through Crimea. He wanted to show her that the newly conquered region was thriving and prosperous. Since he couldn't build real thriving towns overnight, he built fakes—temporary, charming facades of villages—and moved happy, well-dressed people between stops to keep the illusion going.

A beautiful, fake facade built just to impress—it’s a perfect description of a growing problem in technology today: the rise of Potemkin AI.

We are constantly shown brilliant, automated systems, but honestly, they are often just the charming front end. Behind the scenes is a huge, invisible network of human labor. This practice is more than just a clever marketing trick; it reveals a major ethical and economic flaw where the perceived value of high-tech advancement is built, paradoxically, on invisible human effort.

The financial pressure cooker

Why do companies do this? Why build these beautiful facades? For me, the answer is always money: the current AI investment bubble.

It's unbelievable how much power the simple buzzword "AI" has. If a company calls its product "Artificial Intelligence," it attracts more investment and a better valuation than if it honestly calls it a "human-assisted review service." This creates huge pressure, not only from investors demanding an AI strategy but also from CEOs trying to keep up out of pure fear of missing out (FOMO).

This intense pressure forces companies to exaggerate what their machine-learning systems can actually do. The reality is tough:

Recent studies show that companies severely overestimated AI’s ability to take over complex, full-time roles.

Reports indicate that for every $1 of savings sought through AI-related firings, companies are actually spending $1.27. Laying off staff to implement AI is surprisingly expensive in the short term.

MIT found that a staggering 95% of organizations have yet to see a financial return on their AI investments.
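To make the $1.27 figure concrete, here is a minimal arithmetic sketch. It assumes the targeted savings are fully realized, which the reports cited above do not state, so treat it as a best case; the function name and the $1,000,000 target are hypothetical.

```python
def net_result(savings_sought: float, spend_per_dollar: float = 1.27) -> float:
    """Net financial result of a layoff-driven AI rollout.

    Assumes every dollar of targeted savings is actually achieved
    (an optimistic assumption, not a claim from the cited reports).
    A negative return value means a net loss.
    """
    return savings_sought * (1.00 - spend_per_dollar)


# Hypothetical example: a company targets $1,000,000 in savings.
result = round(net_result(1_000_000), 2)
print(result)  # negative: the company spends more than it saves
```

Even in this best case, the company ends up about 27 cents in the red for every dollar it hoped to save.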

When genuine automation fails, the Potemkin solution kicks in: a clean, automated-looking user interface simply funnels data to a vast, often manual, back-office operation. The real function of the technology shifts from solving the problem to simply capturing the data needed for a low-wage human workforce to solve it later.
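The pattern described above can be sketched in a few lines. This is a hypothetical illustration, not any company's actual implementation: the class name, the confidence threshold, and the toy model are all assumptions made for the example.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, Optional, Tuple

# A "model" here is any function returning (answer, confidence in [0, 1]).
Model = Callable[[str], Tuple[str, float]]


@dataclass
class FacadeService:
    """Hypothetical 'automated' service that quietly falls back to people."""
    model: Model
    threshold: float = 0.9  # assumed cutoff; real systems would tune this
    review_queue: Deque[str] = field(default_factory=deque)
    automated: int = 0
    escalated: int = 0

    def submit(self, item: str) -> Optional[str]:
        answer, confidence = self.model(item)
        if confidence >= self.threshold:
            self.automated += 1
            return answer
        # The user still sees a clean interface; the work goes to a human.
        self.review_queue.append(item)
        self.escalated += 1
        return None  # result arrives later, after manual review

    def human_share(self) -> float:
        """Fraction of submissions that ended up in the human queue."""
        total = self.automated + self.escalated
        return self.escalated / total if total else 0.0


def weak_model(item: str) -> Tuple[str, float]:
    # Toy stand-in: confident only about very short inputs.
    return ("ok", 0.95 if len(item) <= 3 else 0.4)


service = FacadeService(model=weak_model)
for item in ["a", "bb", "long basket", "another long basket"]:
    service.submit(item)
print(service.human_share())  # 0.5
```

The number worth watching is `human_share`: the higher it climbs, the more the "AI" is really a data-capture front end for a manual operation.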

Amazon’s Just Walk Out: a case study

To see Potemkin AI in action, we can look at Amazon’s former “Just Walk Out” (JWO) technology.

The promise was simple: computer vision would remove checkout lines, letting customers grab items and go. But the operation was far from seamless. According to reports, it depended on a massive manual effort in which more than 1,000 human reviewers in India watched video footage to verify and correct transactions. At times, these reviewers reportedly had to check up to 70% of purchases.

The automated facade was just the customer-facing endpoint. At its core, JWO was a human-first operation with an "AI" brand wrapper slapped on.

The ethical crisis of the ghost worker

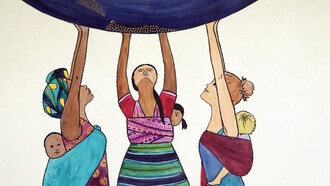

This is where the story gets difficult and, frankly, heartbreaking. The biggest concern is the ethical crisis facing these ghost workers. These people, often in the Global South, are the invisible foundation of the systems we call “intelligent.” They do work that is critical yet tedious, repetitive, and often emotionally traumatic, and we never even see their names.

This isn't easy work. They face poverty wages and extreme mental health challenges due to constant exposure to traumatic and toxic content, yet they are systematically excluded from discussions about technology ethics and labor rights.

The emergence of groups like the Kenyan Data Labelers Association is a vital response. They are directly challenging the exploitative “gig economy” model and insisting that ethical AI must start with fair treatment and visibility for this essential labor force.

Moving beyond the façade

For me, this is what matters most. Ultimately, Potemkin AI risks destroying public trust. It gives us a badly distorted idea of what machines can actually accomplish, and it distracts from real technological progress by celebrating systems that are, to be honest, elaborate, human-powered Rube Goldberg machines.

And there’s another danger we rarely talk about: Potemkin AI doesn’t just distort our understanding of technology—it distorts policy. Governments, regulators, and corporations make decisions based on the illusion of fully automated systems, pouring money into tools that are far less capable than advertised. This widens the gap between expectation and reality, accelerating a cycle of disappointment, distrust, and overcorrection. If leaders keep believing the façade, they will design policies that ignore the very real people behind the curtain. Real progress requires honesty—about limitations, about labor, and about what AI truly is today, not what companies wish it were.

We need to look closer and demand transparency. We must acknowledge the true human work that makes these systems function and ensure those who build the foundation of the AI revolution are treated with the fairness and protection they deserve.

Ethical AI cannot be built on invisible labor. Transparency is not just a moral imperative; it's the only foundation for true progress.