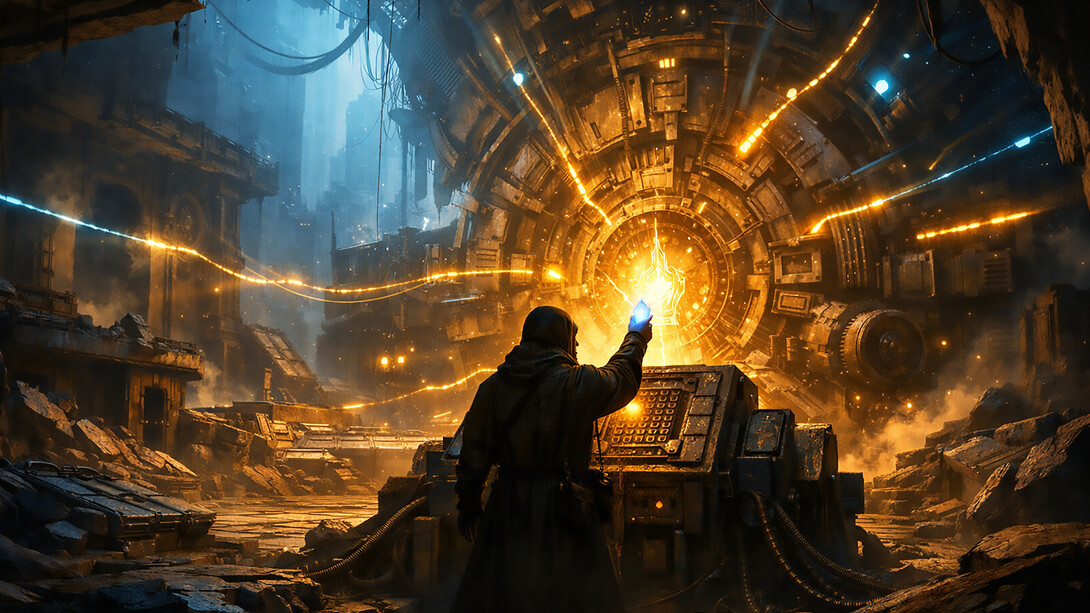

There is a certain type of scene that appears again and again in speculative fiction.

A derelict machine, vast and ancient, lies dormant beneath the ruins of a civilization.

The architecture still stands. The corridors are intact. The consoles respond.

But nothing truly moves.

Then someone places a small, crystalline artifact—a jewel, a key, or a fragment of the original source—into a hidden slot at the core of the engine. For a moment, nothing happens. Then the structure begins to respond: light spreading along forgotten circuits, dormant systems aligning, the whole apparatus revealing that it was never just a monument but an unfinished process.

In Miyazaki’s “Laputa: Castle in the Sky,” this jewel-like element is the access point to a lost technological order. But it could just as well stand for something less visible and more urgent: epistemic capital—the missing core that turns a formally functional civilization into a viable one.

When the engine runs without a core

Contemporary societies are full of functioning subsystems: financial markets, educational institutions, media infrastructures, digital platforms, and legal frameworks. On paper, everything is there: natural, human, social, and institutional capital. The architecture of a highly developed order is present.

And yet the engine misfires.

Environmental stability erodes despite decades of available data.

Human potential is compressed into credential cycles and productivity metrics.

Social fabrics fray even as connectivity explodes.

Institutions oscillate between paralysis and hyperactivity, reacting more to moods than to models.

What is missing is not information. Data are abundant. Models exist. Expertise is not in short supply.

What is missing is the capacity to integrate information into coherent orientation under complexity—the ability to distinguish the signal from the noise and to align action with structural understanding rather than with tactical incentives.

That is what I call epistemic capital: the architecture of valid knowing, not the mere accumulation of facts.

Epistemic capital is the jewel in the engine: small compared to the machinery, yet decisive for whether a civilization operates as a living process or as an impressive, self-consuming apparatus.

Beyond knowledge: what epistemic capital actually is

The phrase “knowledge economy” suggests that the central resource of modern societies is knowledge. But what currently expands is not knowledge; it is symbolic redundancy: more papers, more content, more dashboards, more opinions, and more notifications.

Epistemic capital is something different.

It is the systemic capacity to:

Filter information according to structural relevance, not just emotional salience.

Maintain coherence of models across domains—ecology, economy, technology, and culture.

Expose assumptions instead of hiding them behind institutional decorum or technical jargon.

Hold open nonlinear futures, rather than collapsing them into comforting narratives.

Design institutions and tools—including AI—as enabling structures for judgment, not as substitutes for it.

In other words, epistemic capital is the organized ability of a society to stay in contact with reality while it becomes more complex than any single observer can grasp.

When this core is absent, all other forms of capital—natural, human, social, institutional, and technological—remain formally present, yet are systematically misaligned. The machine runs, but not for anything beyond its own inertia.

AI as an amplifier without architecture

The arrival of powerful AI systems makes this absence brutally visible.

AI can compress redundancy, reveal patterns, and simulate alternatives at a scale that no human bureaucracy could ever handle. But in a context where epistemic capital is weak, these capabilities do not automatically lead to better decisions. They simply accelerate whatever regime of sense-making is already dominant.

If public discourse rewards outrage, AI will optimize outrage.

If institutions reward short-term performance indicators, AI will perfect tactical exploitation of those indicators.

If strategic blind spots are politically convenient, AI will help decorate them with plausible stories.

From the outside, this looks like progress: more dashboards, predictive models, and decision-support tools. From the inside, it can amount to a high-speed hallucination, with the same old biases and omissions now automated and scaled.

The crucial point is this:

AI is not the jewel in the engine.

AI is part of the engine.

It becomes truly civilizationally relevant only when inserted into an epistemic architecture that can ask: Which questions are worth asking? Which models deserve trust? Which forms of complexity must not be flattened for the sake of convenience?

Without this architecture, AI remains a magnificent instrument in search of a framework—a power source feeding a machine that has forgotten its purpose.

Working on the jewel while the walls crack

From the perspective of tactical politics or management, the rational move is often to adapt: to play the game as it is set up, to optimize within the dominant metrics, and to participate in the endless round of symbolic skirmishes.

Building epistemic capital looks, by comparison, almost irresponsible. It does not produce quick wins. It is hard to explain in formats designed for slogans. And it inevitably reveals structural contradictions many actors prefer to keep latent.

The temptation is obvious: If the world trades in narratives and visibility, why not offer better narratives and sharper visibility and leave the deep architecture for later?

The answer is simple and uncomfortable: because by the time “later” arrives, much of what could have been saved or transformed will already be gone.

The work on epistemic capital is, almost by definition, asynchronous with the dominant time regime. It proceeds while attention is absorbed elsewhere; it continues when funding dries up; it refines concepts even when the surrounding discourse prefers identities and camps.

It is similar to the patient restoration of the jewel in the engine—knowing that the structure above may have to go through several cycles of decay and partial collapse before someone finally remembers that the core exists and is needed.

This is not pessimism. It is a different stance toward time: not the timeline of election cycles, market quarters, or media trends, but what might be called vertical time—the time it takes for a civilization to become aware of its own conditions of viability.

Sapiopoiesis: designing for subject potentiality

In my broader framework of Sapiopoiesis, epistemic capital is not just a prestige resource for elites; it is the enabling condition for subject potentiality.

A civilization that systematically neglects epistemic capital trains its members to think tactically, not structurally. Subjects learn to:

Manage impressions instead of realities.

Treat complexity as a threat instead of a resource.

Outsource judgment to systems they do not understand.

A sapiopoietic civilization, by contrast, would treat epistemic capital as its primary investment:

Educational systems would focus less on content and more on orientation competence—the ability to move under uncertainty without collapsing into dogmatism or paralysis.

Institutions would be redesigned as epistemic interfaces—nodes where different domains, time horizons, and perspectives are brought into structured conversation, rather than into competition for attention.

AI would be explicitly framed as an infosomatic partner: a means to offload redundancy and free human attention for those irreducible decisions where responsibility cannot be automated.

In such a context, epistemic capital is not a luxury good. It is the minimum requirement for Sapiocracy—an order in which legitimacy is derived from the ability to sustain coherent, reality-attuned sense-making, not from mere power or performance theater.

The engine, the artifact, and the after

Every civilization story has a before, a during, and an after.

The “before” is the period in which the engine is built: institutions, infrastructures, symbols, and stories. The “during” is the long stretch in which the system functions, often long after it has lost contact with its original purpose. The “after” is usually narrated as collapse, regression, or replacement.

Epistemic capital offers another possibility: an after in which the engine does not simply fail but is re-keyed, activated from a deeper layer, with a different understanding of what it means to be viable.

The work on that key is not glamorous. It rarely fits into panel discussions or strategy slides. It looks, from the outside, like someone polishing a small, unnecessary object while the building trembles.

And yet, if history and fiction share one lesson, it is this:

When the moment comes, entire architectures can depend on whether someone, somewhere, has taken the time to shape the artifact that still fits the core.

Epistemic capital is that artifact.

The question is not only whether societies will recognize it in time.

The question—for those who work on it—is whether the crafting continues even when recognition is absent.

The answer, at least for me, is settled:

The engine is already there.

The jewel is still being cut.