The traditional compact between higher education and the modern economy has fundamentally broken down. Schools are training students for a world that no longer exists, while actively penalising the skills needed for the world they are entering. The central challenge has always been the acceleration of students along the continuum of competence: moving them from abstract student knowledge to demonstrable professional impact. Artificial intelligence is both the catalyst behind this disruption and the only viable accelerator for achieving meaningful adaptation.

The shrinking value of rote knowledge

In the educational context, "competence" has traditionally been measured by the ability to retain and regurgitate information. However, the shelf life of this "Rote Knowledge" has expired.

The following illustrates how completely AI has conquered this domain:

Academic benchmarks: AI models now pass the Uniform Bar Exam in the 90th percentile and ace the US Medical Licensing Exam. If a machine can "know" the entire legal and medical canon instantly, human memorisation is no longer a competitive advantage.

The "knowledge debt" in schools: A 2025 report highlights that 85% of students now use AI to complete assignments, effectively offloading the "struggle" of learning. While this produces correct answers, it hollows out the foundational understanding (the "root" knowledge) that previously signalled student potential.

The workplace mirror: This creates a jarring disconnect. Schools continue to grade students on knowledge recall (facts, definitions, and formulas); routine skill application (solving standard problems, writing essays, coding basic syntax, and conducting experiments); and even basic critical thinking (analysing or summarising texts)—skills that the market has already automated. Consequently, we are seeing a 46% drop in traditional graduate roles in the tech sector year-over-year, as companies stop hiring humans to do work that software does for free.

This shift explains why the "broken compact" I introduced is so painful: graduates are selling a commodity (rote knowledge) that no one wants to buy anymore.

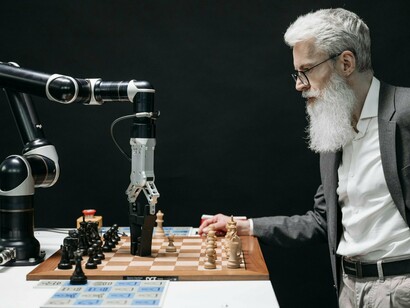

The rise of generative AI has permanently redefined the value of human labour. AI has commoditised routine cognitive tasks, increasing the market demand for unique, non-routine human competencies. Unlike previous waves of automation that primarily affected manual and routine blue-collar work, generative AI has a profound impact on white-collar cognitive tasks.

The toxic environment: the institutional failure in the age of AI

The rapid, disruptive emergence of generative AI has exposed a systemic institutional failure within education, creating a "shadow AI" environment characterised by a lack of guidance, inconsistent policy, and a destructive policing approach that harms students. This failure accelerates the obsolescence of traditional student credentials.

1. The institutional gap: unprepared staff and missing guidance

Despite the widespread adoption of AI tools by students and educators, institutions are failing to provide the necessary support, leading to informal and often irresponsible integration.

Lack of teacher preparedness and training: A significant majority of teachers feel unprepared to use AI for teaching or assessment. A 2025 survey highlights that 76% of teachers felt unprepared, while another report indicates that 68% of urban teachers have not received any kind of AI training. This gap between usage (83% of K-12 teachers used GenAI in 2023-2024) and institutional support means many schools are implementing AI poorly—or not at all—because staff lack the confidence, time, or resources.

Missing or unclear policies: Institutions largely lack the clear frameworks needed to govern AI use. In 2024–2025, over 60% of educators stated their districts had not made clear policies for AI use by teachers or students. Without specialised guidelines (on assignments, data privacy, and ethical deployment), decisions on AI usage often rely on individual teachers, resulting in inconsistent practice rather than coherent, institution-wide policy.

Widespread, but informal student use: Use of AI by students is pervasive, yet formal guidance is scarce. A 2025 survey found that 92% of students are now using AI in some form, with 88% having used it for assessments. However, only a minority receive formal guidance from their institution on its responsible use, leading to an ad hoc integration where AI is often used as a shortcut rather than a coherent instructional tool.

Resource and capacity constraints delay implementation: A crucial obstacle to meaningful adaptation is the lack of budget, infrastructure, and capacity. Especially in under-resourced contexts like rural or underfunded schools, professional development to support AI adoption is scarce. This lack of AI integration guidance and resources leads to institutional inertia and delays the systemic implementation necessary to prepare both learners and educators for an AI-driven future, risking a divided landscape where some are prepared and others are left behind.

2. Traditional assessments and curricula—built for pre‑AI contexts—make cheating (or shortcutting) easier

Traditional assessment practices, focused on measuring rote knowledge, are now easily compromised by generative AI, further undermining the credibility of degrees and setting up students for failure in the professional world.

Many standard assignments are now easily solvable with AI

The cognitive tasks measured by traditional assessment formats are now highly vulnerable because AI tools such as generative-AI chatbots can produce plausible answers quickly and accurately. These routine assignments include:

Essays and Multiple-Choice Questions (MCQs)—two of the assessment formats most vulnerable to AI misuse.

Short-answer questions focused on factual recall (e.g., asking "What are the five Ps of marketing?")

Structured problem-solving (e.g., standard maths or science problems).

Analytical case studies where the prompt and context are clearly defined.

In fact, the authors of one recent academic study argue that traditional essays have become "outdated and inauthentic", given how easily an AI can write essays that pass as human—even when submitted to plagiarism detectors.

Institutions respond by reworking, not redesigning

Schools are responding by abandoning or reworking take-home essays and homework: Some educators report that take-home assignments are effectively obsolete; with AI widely available, giving writing assignments to complete outside class is now often seen as "inviting cheating".

As a result, many teachers are shifting toward in-class writing assignments, oral assessments, or supervised tasks—reverting to older, more controlled formats to try to ensure authenticity.

Teachers and institutions openly acknowledge the challenge: A 2025 study published in the Journal of Academic Ethics argues that generative AI undermines the credibility of traditional assessment and that technical countermeasures (e.g., digital proctoring) are insufficient on their own.

The growing consensus: assessment practices must be redesigned

Some scholars argue that the problem isn't simply cheating—it's that traditional assessment formats no longer meaningfully assess learning when AI is so accessible. They advocate redesigning assessments to emphasise higher-order thinking: analysis, problem-solving, creativity, application, oral exams, practical tasks, etc.

For example, a 2025 paper proposes an “AI-resilient assessment” framework: one that de-emphasises rote writing/recall and instead uses tasks that require human judgement, originality, and deep understanding.

3. The consequences: distrust, hollow graduates, and inequity

This institutional failure to adapt is producing three destructive consequences that directly reinforce the core argument about the broken compact between education and the economy.

A. Distrust, panic, and unreliable detection: the flawed policing model

As institutions panicked over mass student AI use, a new market for AI detection tools emerged. These tools—such as Turnitin, GPTZero, Copyleaks, and Winston AI—are machine learning models trained to estimate the probability that a text was generated by an LLM (Large Language Model) by analysing patterns like perplexity (the randomness of word choices) and burstiness (the variation in sentence structure).
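The two signals named above can be illustrated with a toy sketch. It is not how Turnitin, GPTZero, Copyleaks, or Winston AI work internally: commercial detectors rely on large neural language models, whereas this example assumes a simple unigram word model with add-one smoothing for perplexity, and the coefficient of variation of sentence lengths for burstiness. The function names `tokenize`, `unigram_perplexity`, and `burstiness` are hypothetical and chosen only for illustration.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def tokenize(text):
    """Lowercase word tokens; a deliberately crude tokenizer."""
    return re.findall(r"[a-z']+", text.lower())


def unigram_perplexity(text, reference_text):
    """Perplexity of `text` under a unigram model fitted on `reference_text`.

    Add-one (Laplace) smoothing keeps unseen words from getting zero
    probability. Lower values mean the wording is more predictable.
    """
    ref_counts = Counter(tokenize(reference_text))
    tokens = tokenize(text)
    if not tokens:
        raise ValueError("text has no tokens")
    vocab = set(ref_counts) | set(tokens)
    total = sum(ref_counts.values()) + len(vocab)
    log_prob = sum(math.log((ref_counts[t] + 1) / total) for t in tokens)
    return math.exp(-log_prob / len(tokens))


def burstiness(text):
    """Coefficient of variation of sentence lengths, measured in words."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(tokenize(s)) for s in sentences]
    if not lengths or mean(lengths) == 0:
        return 0.0
    return pstdev(lengths) / mean(lengths)


if __name__ == "__main__":
    reference = "the cat sat on the mat. the dog sat on the rug."
    sample = "The cat sat. Then, quite unexpectedly, the dog leapt over the rug!"
    print(unigram_perplexity(sample, reference))
    print(burstiness(sample))
```

Both functions return a single number per text, which is why a detector built on signals like these can only ever express a likelihood. Uniform sentence lengths and predictable wording push the scores toward the "machine-like" end, but careful human writers can produce the same pattern.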

Institutions, desperate for a "quick fix" to a complex pedagogical problem, often trusted the marketing of these tools, which advertised high accuracy rates (some claiming up to 99.98%) and positioned themselves as essential to "safeguarding academic integrity". This reliance established a flawed policing model over true educational reform.

However, research and institutional experience quickly exposed the unreliability of this approach:

High error rates (false flags): AI detectors are not foolproof; they provide probabilities, not proof. Studies show they frequently produce false positives, incorrectly flagging legitimate human writing, especially that of non-native English speakers or writing that has been subtly edited by the student.

Ease of evasion: Conversely, students employing simple "masking" or prompt-engineering techniques, or using AI paraphrasing/humaniser tools, can often submit AI-generated content that completely evades detection. A 2024 University of Reading study, for instance, found that AI-generated exam answers went undetected 94% of the time in a real-world test.

Institutional backlash against detectors: The global human cost of these flaws has led to high-profile institutional retreats:

- UCT scraps detectors: The University of Cape Town (UCT) announced in July 2025 that it would discontinue the use of AI detection tools (like Turnitin AI Score), citing concerns over their unreliability, which risked undermining student trust and fairness.

- False accusations and human cost: The Australian Catholic University (ACU) scandal demonstrated the danger, as the institution falsely accused many students based on unreliable detection, leading to months-long investigations and delays in graduation.

This panicked reliance on flawed detectors transforms the learning environment into one of surveillance, where the fear of being falsely accused erodes trust. The panic forces students to "humanise" their writing (introducing intentional errors or unnatural phrasing) just to avoid detection—a process that actively undermines the actual goals of learning and risks alienating students.
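The arithmetic behind "probabilities, not proof" can be sketched in a few lines. The cohort size, share of AI-written work, and error rates below are hypothetical inputs chosen purely for illustration; they are not drawn from any vendor claim or from the studies cited here, and `flagged_breakdown` is an illustrative name.

```python
def flagged_breakdown(n_submissions, ai_share, false_positive_rate, true_positive_rate):
    """Return (false_flags, true_flags, precision) for a detector run on a cohort.

    false_flags: human-written submissions wrongly flagged as AI.
    true_flags:  AI-written submissions correctly flagged.
    precision:   share of all flagged submissions that really were AI-written.
    """
    ai_written = n_submissions * ai_share
    human_written = n_submissions - ai_written
    false_flags = human_written * false_positive_rate
    true_flags = ai_written * true_positive_rate
    flagged = false_flags + true_flags
    precision = true_flags / flagged if flagged else 0.0
    return false_flags, true_flags, precision


if __name__ == "__main__":
    # Hypothetical scenario: 10,000 submissions, 10% AI-written,
    # a 1% false positive rate and a 90% true positive rate.
    false_flags, true_flags, precision = flagged_breakdown(10_000, 0.10, 0.01, 0.90)
    print(round(false_flags), round(true_flags), round(precision, 3))
```

Under these assumed inputs, a false positive rate that sounds small still translates into dozens of wrongly flagged students in a single cohort, and each of those flags looks identical to a genuine one. The absolute number of false accusations scales with the volume of honest work scanned, not with the headline accuracy figure.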

B. Graduates hollow of real understanding

The problem is two-fold: students who pass with AI assistance and those who avoid detection entirely. Because AI can generate competent work that often evades detection, students can achieve high marks while suppressing critical thinking and independent problem-solving.

The competence gap: This failure risks producing cohorts of “credentialed” individuals who lack the genuine expertise or adaptive skills that professional environments now demand.

Market rejection: The consequence is a graduate workforce selling a commodity (rote knowledge) the market no longer values, directly contributing to the 46% drop in traditional graduate roles observed in the tech sector, as companies stop hiring humans to do work that software does for free.

C. Delays, unfair punishment, and inequity

The institutional failure results in tangible negative consequences for students:

Jeopardised progression: Students whose work is flagged by unreliable AI detectors may be forced to repeat assignments or modules—delaying graduation or progression, as happened in the ACU case.

Fairness concerns: Furthermore, the use of detection tools tends to disproportionately impact non-native language writers or students with different writing styles, raising significant equity and fairness concerns.

AI as the viable accelerator: re-engineering the continuum of competence

The fundamental challenge for higher education is no longer to prevent cheating but to re-engineer the learning process itself. Artificial intelligence is the only viable accelerator capable of moving students rapidly along the continuum of competence—shifting them from abstract student knowledge to demonstrable professional impact.

AI's value lies not in automation but in personalised leverage. It offloads the commoditised tasks of information recall and routine skill application, forcing the educational system to focus entirely on cultivating unique, non-routine human competencies like critical thinking, complex problem-solving, and professional synthesis.

1. The pedagogy of personalisation: customising the journey

AI-driven tools solve the scalability problem of personalised education, allowing every student to optimise their journey along the continuum toward mastery.

By personalising the learning pace and providing continuous, objective feedback, AI ensures that the foundational "root knowledge" is efficiently cemented, thereby accelerating the student's readiness to move to higher-order application.

2. Redesigning assessment for professional impact

AI forces institutions to abandon vulnerable, rote-based tasks and instead design AI-resilient assessments that mirror the complexity of the professional world. The focus shifts from measuring what a student knows to evaluating what a student can do when collaborating with an AI.

These redesigned assessments ensure that students are graded on their ability to command the entire human-AI synergy, which is the skill most demanded by the modern economy.

3. Fostering the new human competencies

The ultimate role of AI in education is to liberate teachers and students from routine cognitive tasks, allowing classroom time to be dedicated to cultivating the unique non-routine human competencies that determine professional success:

AI literacy and ethical use: Students are trained to use AI as a collaborator—mastering prompt engineering, critically evaluating AI output, and applying UNESCO-defined ethical frameworks to their work. This ensures they are AI-literate, a skill increasingly critical to employers.

Creative synthesis and judgement: By automating the initial draft or data/information summary, AI allows students to immediately jump to the highest-value work: synthesising disparate data, exercising human judgement over machine output, and generating original, creative solutions to complex problems.

The teacher as facilitator: With AI handling much of the rote teaching and grading, the teacher's role elevates to that of a mentor and coach. This shift allows educators to focus on developing students' interpersonal skills, emotional intelligence, and communication abilities—the uniquely human traits required for leadership and professional impact in the AI workplace.

Misinformation, bias, and critical evaluation: The necessity of human judgement is amplified by the inherent flaws of generative AI. AI can generate misinformation ("hallucinations") or present biased information as fact. Educators are rightly concerned about teaching with a tool that can hallucinate facts and perpetuate algorithmic biases. Students need to be taught to critically evaluate AI output, but many lack the foundational guidance and literacy to do so, leading to the risk of normalising inaccurate or skewed data. This places a massive burden on teachers to constantly fact-check and teach meta-literacy. Non-routine competency is therefore defined by the ability to verify, contextualise, and ethically challenge the output of powerful models.

Measuring non-routine competencies: Assessment must move away from easily automated final products and focus on the observable process and the defensible outcome. This means abandoning formats easily compromised by AI, such as take-home writing work, assessments with automatically answerable questions, multiple-choice tests, take-home exams, or portfolios (which are often unproctored or rely on flawed proctoring tools). Digital proctoring apps and AI detection tools are not a solution; they are unreliable, fear-driven countermeasures that do not solve the underlying pedagogical crisis. Instead, institutions must implement high-stakes, authentic, AI-resilient assessment methods that mirror real-world demands and make human thinking visible:

- Defence of work and critical verification (the AI-integrated rubric): The focus shifts from the final product to evaluating the student's expert judgement and value-addition. Students must explicitly defend their process and critique the machine's initial output in oral examinations or presentations. Assessment rubrics must prioritise the following:

Data synthesis and fact-checking: Scoring the student's ability to synthesise additional, relevant information beyond the AI's core output, ensuring all facts are correctly referenced and verified to counter hallucination risks.

Creative and analytical depth: Evaluating the student's capacity for critical analysis and innovative problem-solving when refining machine-generated suggestions.

Prompt mastery: Requiring the submission of the prompt draft used, with scoring dedicated to the structure, keywords, and technical coding of the prompt itself, recognising prompt engineering as a core professional skill.

- Performance-based assessment (real-world competence): These assessments require students to apply knowledge in realistic, dynamic contexts, directly simulating professional life:

Simulated client interaction: Students are evaluated on their ability to manage complex, ambiguous challenges through role-playing with an AI model configured to act as a manager, client, or executive. This scenario demands immediate, on-the-spot application of communication skills and expertise to handle work-related enquiries or crises.

Learning loop: Following the practical scenario, the AI provides immediate, personalised feedback on where the student's performance could be improved, facilitating a continuous and engaging cycle of improvement and self-correction.

Professional demonstration (flexible delivery): Students must demonstrate their communication skills, professional polish, and research illustration through high-stakes presentations. The assessment focuses on the core competencies—delivery, content structure, non-verbal communication, and audience engagement—whether the work is delivered as a live, in-class presentation or as a recorded submission utilising professional digital tools (where applicable). The key metric is the student's ability to convey complex information effectively to a professional audience, demonstrating content mastery alongside the practical use of technology.

- Process and metacognitive assessment: To ensure genuine engagement, the entire workflow is graded through multi-stage assignments that demand reflection and iterative effort, including version history, reflection journals, and annotated drafts tracking the ethical application of AI.
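As one possible way to operationalise the AI-integrated rubric described above, the sketch below combines the three named criteria into a single weighted mark. The weights and the 0 to 5 scoring scale are assumptions made for illustration only; the text names the criteria but does not assign weights or a scale, and `rubric_score` is a hypothetical helper.

```python
# Hypothetical weights: the criteria come from the rubric above,
# but the relative weighting is an assumption for illustration.
WEIGHTS = {
    "data_synthesis_and_fact_checking": 0.4,
    "creative_and_analytical_depth": 0.4,
    "prompt_mastery": 0.2,
}


def rubric_score(scores, weights=WEIGHTS, scale_max=5):
    """Weighted percentage from per-criterion scores on a 0..scale_max scale."""
    if set(scores) != set(weights):
        raise ValueError("scores must cover exactly the rubric criteria")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    for name, value in scores.items():
        if not 0 <= value <= scale_max:
            raise ValueError(f"{name} is outside 0..{scale_max}")
    return 100 * sum(weights[c] * scores[c] / scale_max for c in weights)


if __name__ == "__main__":
    oral_defence = {
        "data_synthesis_and_fact_checking": 4,
        "creative_and_analytical_depth": 3,
        "prompt_mastery": 5,
    }
    print(rubric_score(oral_defence))
```

Keeping the weights in one explicit table makes the marking scheme easy to publish to students in advance and easy for a department to adjust, for instance by giving verification more weight in disciplines where factual accuracy is critical.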

Leveraging AI as an accelerator is not a compromise; it is the only viable strategy for fulfilling the broken compact, enabling higher education to transition students from mere knowledge retention to genuinely impactful professional competence.

How AI replaced some high-demand workplace areas

Entry-level jobs are no longer about doing routine tasks, which AI has commoditised. They are about managing, critiquing, and leveraging AI output to solve complex, ambiguous problems.

How students can secure and thrive in entry-level roles

To overcome the 46% drop in traditional graduate roles, students must prove they possess the non-routine competencies that cannot be automated. This requires shifting from a "knowledge seller" to a "competency demonstrator".

A. Entry-level skills to master (the new competence)

Prompt mastery & engineering: The ability to communicate with an AI model effectively, crafting detailed, iterative prompts that yield high-quality, relevant output. This should be explicitly listed on a resume.

Critical verification & fact-checking: A deep commitment to and demonstrable process for validating AI-generated facts and assumptions to guard against "hallucinations" and bias, turning AI output into a reliable starting point, not a final answer.

AI-integrated workflow management: Proficiency in using AI tools (not just ChatGPT, but industry-specific LLMs, co-pilots, and automation tools) as part of a structured, iterative work process, managing the version history and ethical application, as proposed in the Process and Metacognitive Assessment section.

Synthesis and judgement: The capacity to take disparate information (human and machine-generated) and synthesise it into an original, coherent, and defensible strategy, a skill only cultivated through high-stakes, authentic real-world tasks.

B. Proving capability (the professional portfolio)

Traditional resumes and transcripts, which showcase grades in rote-based assessments, are insufficient. Graduates need a professional portfolio that explicitly demonstrates the AI-resilient assessment strategies outlined in the text.

By redefining their skills and evidence around these non-routine competencies, students can transition from being graduates who are "hollow of real understanding" to indispensable professional partners who can command the human-AI synergy demanded by the modern economy.

Where to from here: the mandate for systemic re-engineering

The journey along the Continuum of Competence, accelerated by AI, demands a final, decisive stage: a complete, systemic re-engineering of higher education institutions (HEIs). The current state of "institutional failure" and the "toxic environment" of policing must give way to a strategic framework built on adaptation, policy, and investment.

The focus must immediately shift from controlling student behaviour to fundamentally redesigning the educational contract itself.

1. The institutional mandate: policy, governance, and training

The most urgent next step is to address the institutional gap by establishing clear, unified frameworks for AI adoption and ensuring continuous training across the board.

Establish a unified policy framework: HEIs must move beyond individual teacher discretion. Policies should be adaptive, transparent, and ethical, mandating disclosure of AI use in academic work while focusing on responsible and effective integration, not prohibition. This framework must cover data privacy [establishing protocols for protecting sensitive student data, especially when integrating vendor-supplied AI systems], ethical use [clearly defining appropriate and inappropriate use of AI and mandating protocols to address potential algorithmic bias and misinformation in AI outputs], and accessibility, ensuring AI does not widen the digital divide.

Establish a centralised AI Strategy Office (ASO): A dedicated, cross-disciplinary office should be tasked with integrating AI across all university functions—from administration (real-time insights, efficiency gains) to curriculum strategy and research ethics. This makes AI "too big to be ignored" and central to the institution's mission. The ASO's mandate is to guide policy, oversee implementation, and ensure alignment with core pedagogical goals. Critically, this office must be empowered to constructively challenge management and steer the transformation toward measurable educational impact, ensuring the institution is driven by strategic foresight rather than reactive hype.

Sustained teacher professional development: elevating the educator role

To counter the lack of teacher preparedness, training for faculty and staff must be comprehensive, ongoing, and designed to elevate the teacher's role from a knowledge dispenser to a facilitator and mentor.

Mandatory staff and faculty training: Institutions must invest in mandatory professional development. Training should focus on using AI as a pedagogical partner (e.g., for creating personalised feedback and adaptive learning systems) and on designing AI-resilient assessments rather than relying on flawed detection tools. Professional development must be prioritised to equip educators with the skills to effectively integrate AI into teaching and assessment.

Redesigning assessment training (the core focus): Training must teach educators how to design high-stakes, authentic, and AI-resilient tasks (e.g., oral defence assessments, complex scenario-based problems) that require human judgement, moving them away from easily automated formats like take-home essays.

Community of practice and sharing: Establish institution-wide, cross-disciplinary Communities of Practice (CoPs) where educators regularly share successful AI-resilient assignments, critique emerging AI tools, and collaboratively refine missing or unclear policies.

Time and resources: Institutions must allocate dedicated time, budget, and incentives for professional development. The time previously spent on routine grading (which AI can handle) should be explicitly reallocated to curriculum redesign and training.

2. Curriculum and pedagogy: the AI-resilient core

The core educational experience must be rebuilt to emphasise the new human competencies that determine professional impact.

Systematic curriculum overhaul: All courses must be reviewed to replace assignments based on rote knowledge with tasks that require higher-order thinking: analysis, synthesis, creation, and defence. This is the only way to make the degree credible again.

Embed AI literacy as a foundational skill: AI literacy—the ability to use, critique, and ethically leverage generative tools—must become a required, accredited competency. Students should be taught prompt mastery and critical verification techniques explicitly across disciplines.

Adopt process-orientated assessment: Fully commit to AI-integrated rubrics and performance-based assessment. This means:

Focusing on process: Requiring version histories, reflective journals, and annotated drafts to make human judgement visible.

Authentic scenarios: Implementing high-stakes, real-world simulations (like the Simulated Client Interaction) that require students to apply knowledge under pressure, demanding non-routine human competency.

Ongoing student training: mastering the AI workflow

Student training must be embedded directly into the curriculum, making AI literacy and ethical practice a core competency that is assessed and refined throughout their academic journey.

Mandatory AI ethics and literacy modules: Required modules should cover ethical principles, data privacy, and academic integrity upon entry, followed by discipline-specific, applied training on industry-relevant AI tools. Students must be taught to view AI as a collaborator, understanding its capabilities and, more importantly, its limitations (e.g., hallucinations and bias).

Skill refinement through iterative projects: Students should be required to submit iterative project logs and reflection journals throughout their studies, forcing them to practise process and metacognitive assessment and actively refine their AI-integrated workflow.

The AI "driver's licence": Consider a certified AI proficiency exam or "licence" that students must pass before graduation, demonstrating their ability to ethically and effectively deploy AI tools to solve complex problems and defend their output.

3. Investment and equity: accelerating all students

AI is an accelerator, but only if access and resources are managed equitably.

Prioritise infrastructure and resource allocation: Address resource and capacity constraints by investing in the necessary technology (e.g., adaptive learning platforms) and, crucially, in the human capital to support it. This investment ensures that under-resourced schools are not left behind, averting a divided landscape. The institutional goal should be to sustain a dynamic alignment model that is adaptive to ongoing technological change.

Leverage AI for personalised feedback: Fully deploy intelligent tutoring & feedback systems to dramatically shorten the learning loop. By offloading routine grading, faculty are liberated to focus on their role as mentor and coach, developing students' interpersonal and emotional intelligence—the uniquely human traits required for leadership.

Measure and market competence: Institutions must actively track student growth in non-routine skills (critical thinking, ethical judgement) using AI-powered learning analytics. The degree must be rebranded and accompanied by a portfolio that explicitly certifies the graduate's ability to achieve professional impact in an AI-integrated workplace, thereby repairing the broken compact with the modern economy.

In conclusion, the unguided and widespread use of Shadow AI by students and teachers creates a serious and immediate threat to the credibility of higher education, risking a future defined by hollow graduates, unfair academic penalties (e.g., delayed graduations due to unreliable detection), and increasing rates of unemployment where rote knowledge holds no value. This current state reflects a systemic institutional failure to adapt.

The path forward is not prohibition but systemic re-engineering where AI acts as the viable accelerator. This shift requires immediate action focused on cultivating non-routine human competencies (critical thinking, ethical judgement, and creative synthesis) via AI-resilient assessments and continuous, mandatory training for both faculty and students. The future of education relies on strategically leveraging AI to certify not what a graduate knows but what they can do by commanding the human-AI synergy, thereby repairing the critical gap between academic credentialing and professional readiness.

Notes

Arredondo, P., Driscoll, S. and Schreiber, M. (2023). GPT-4 Passes the Bar Exam: What That Means for Artificial Intelligence Tools in the Legal Profession. Stanford Law School.

Bergin, J. (2025). University wrongly accuses students of using artificial intelligence to cheat. ABC News.

Clark, L. (2025). Tech industry grad hiring crashes 46% as bots do junior work. The Register.

Crisp, M., et al. (2025). Generative AI and the social functions of educational assessment. Assessment & Evaluation in Higher Education.

DemandSage (2025). 71 AI in Education Statistics 2025 – Global Trends.

Gent, E. (2025). An AI Council Just Aced the US Medical Licensing Exam. SingularityHub.

Horgan, R., Jarratt, S., & Prowse, N. (2024). A real-world test of artificial intelligence infiltration of a university examinations system: A “Turing Test” case study. PLOS ONE.

Mashinini, G. (2025). UCT scraps flawed AI detectors. UCT AI Initiative - University of Cape Town.

Smart Thinking (2025). Student generative AI survey 2025.

Ticong, L. (2025). 85% of Students Now Use AI, Calling It a '24/7 Tutor'. EWeek.

Williams, A. (2025). Integrating artificial intelligence into higher education assessment. Intersection: A Journal at the Intersection of Assessment and Learning, 6(1), 128-154.

Yeadon, W., et al. (2025). The Death of the Short-Form Physics Essay in the Coming AI Revolution. ArXiv.