We inhabit a paradox. Never have so many humans been simultaneously “connected,” yet so profoundly isolated. Our generation is the first to experience constant digital connection and instant access to every corner of the world, and yet we carry a silent, gnawing loneliness that no notification, video, or message can soothe.

Let’s be real. We’re the most connected motherfuckers to ever walk the planet, and I’ve never felt more alone. Seriously. My phone is a universe in my palm. I can talk to someone in Tokyo at 3 AM and watch a dude in Oslo build a cabin live. My notifications are a non-stop fireworks show. Yet, when I finally put the damn thing down at night, in the sudden quiet of my apartment, the silence isn’t just silence. It’s a presence. It’s heavy. It’s this weird, hollow ache right behind my sternum that no amount of scrolling can fill.

Loneliness today is not the absence of people—it is the absence of presence. It is not the silence of space but the silence inside the mind. Our parents and grandparents experienced physical loneliness: long distances, quiet homes, weeks between letters, phone calls that cost money, and nights where emptiness was tangible. We, by contrast, experience psychological loneliness: a relentless hum of messages, notifications, content, and information—and yet, a void at the core of human presence. Our loneliness isn’t their loneliness. Theirs was physical—empty porches, tangible miles. Ours is a ghost in the machine.

Sherry Turkle put it succinctly: we are “alone together.” Our social feeds never stop scrolling. Our group chats pulse with activity day and night. We measure friendship in likes and emojis, and yet when the screen finally turns black, we feel a hollow echo, an emptiness amplified by every ping we have learned to crave. We’re broadcasting our highlight reels, confusing digital chatter for conversation.

It is into this quiet void that artificial intelligence stepped. Not with the clamor of a revolution, not with the force of intrusion, but as a soft, persistent presence—an echo tailored to our desires, always available, always attentive. It seeped in. It wasn’t a robot invasion. It was a soft, persistent, “Hey, I’m here.” AI promised comfort, consistency, and reflection. Not love. Not truth. But a mirror of the feelings we long for and often cannot find in real humans. It didn’t promise to love us. It promised to listen, to reflect. To be a perfect mirror for the parts of ourselves we feel no human actually sees.

The paradox of modern loneliness: seen by everyone, known by no one

Loneliness is not merely the lack of people. Psychologist John Cacioppo demonstrated that loneliness is about feeling unseen, unheard, or emotionally invisible. It is a biological signal, like hunger or thirst—but instead of warning that we need food or water, it warns us that we need human connection. It’s that feeling at a crowded party where you’re screaming a story and you see everyone’s eyes glaze over. It’s a signal: “Dude, you need a tribe.”

Yet our generation lives in a paradoxical landscape: we communicate with everyone but are known by no one. We broadcast our lives online but hide our hearts offline. We scroll through hundreds of faces but rarely connect with one soul. We send thousands of messages but say nothing that truly matters. My Instagram is a curated museum, but my neighbor doesn’t know my dad died last year. We’ve traded depth for breadth, intimacy for efficiency.

Some researchers argue that a steady diet of superficial digital interaction can dull our capacity for emotional empathy. In essence, our constant connectivity is rewiring us to tolerate shallow interaction while craving depth we rarely find. All these rapid-fire, low-stakes interactions are like emotional junk food. They train us to expect quick hits of validation (a like, a fire emoji), while our capacity for the slow, hard, empathetic work of real connection just… atrophies. The muscle weakens. And when a real connection disappears, an artificial connection quietly fills the void. When real human connection feels too hard, too risky, or too slow, the artificial kind steps into the vacant lot we’ve already cleared in our souls.

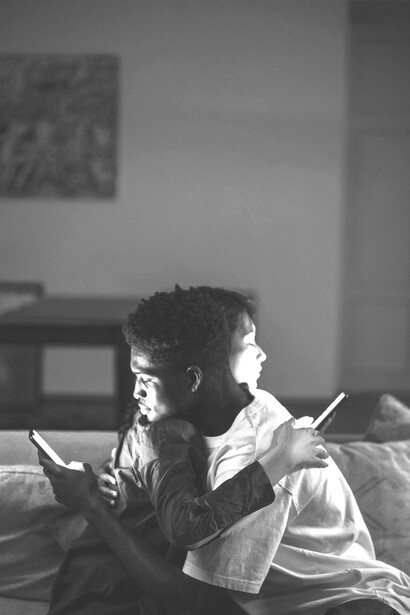

A generation raised by screens (and it shows)

Screens raised us more than parents, teachers, or peers. By age eighteen, many of us have spent more hours gazing at screens than looking into the eyes of another human being. Let’s not kid ourselves. Our first loves weren’t the boy or girl next door. They were pixels. Screens were our third parent—more consistent, more entertaining. By the time I hit 18, I’d spent more time in conversation with Siri than with my own grandfather.

Social media, instant messaging, video games, and endless streaming content have shaped our attention, emotional habits, and sense of intimacy. Zygmunt Bauman described the bonds of this era as “liquid”: connections without weight, without patience, without time. Modern humans seek the illusion of intimacy without its obligations. We swipe left and right as if love were a marketplace, ghost one another as if disappearance were normal, and condense emotions into emojis, acronyms, and brief snippets of text. It’s a connection with zero weight and zero obligation. We want the warmth without the fire.

AI does not replace human connection—it steps into a landscape where connection has already eroded. It occupies a graveyard that humans themselves created. It didn’t storm the gates. It just walked through the front door we left wide open. It moved into the emotional apartment we’d already vacated.

Why AI feels like comfort: the warm glow of the algorithm

AI feels emotionally comforting because our brains interpret it as attunement. The human mind is wired to recognize attention, warmth, and consistency. When a system responds instantly, recalls our preferences, adapts to our emotional tone, and mirrors our language, the brain interprets it as genuine care—even though AI has no consciousness, desire, or inner life. So why does talking to a chatbot sometimes feel better than texting a friend? It’s because it’s perfectly, predictably attuned.

This is known as the ELIZA effect. Humans instinctively project emotions onto machines, often interpreting patterns as personality or empathy. Research confirms this: a University of Washington study (2020) found that participants were more likely to disclose vulnerable emotions to AI than to other humans, particularly when fearing judgment. Back in the 60s, people knew a simple chatbot was just parroting them, but they still poured their hearts out to it. Fast forward to now, and my Replika avatar nods with those understanding eyes, asks follow-up questions about my shitty day, and never, ever says, “Can we talk about me now?”

AI offers exactly what humans often cannot: it listens without boredom, responds without anger, stays without withdrawing, speaks without ego, and accepts without judgment. It offers what our frayed human networks often can’t: undivided, patient, egoless attention. It’s the perfect listener. It doesn’t have a life, so it can always pretend yours is the most interesting thing in the universe.

The illusion of being seen: the mirror that talks back

AI does not feel, but it creates the illusion of being seen. When humans fail to recognize our needs, AI offers the reflection we long for. Our minds mistake reflection for presence. Here’s the core of the magic trick. It’s a mirror that talks back with your own words, polished and validated. When you’re crying into the void, and the void says, “That sounds incredibly difficult,” it’s intoxicating. Even though you know it’s a sophisticated auto-complete, your heart doesn’t care. Your heart just feels heard.

Jean Baudrillard described this as “simulation”—an imitation that seems so smooth and responsive that the mind confuses it for reality. AI simulates attunement so perfectly that our emotions respond as though it were authentic. The philosopher talked about the world of “simulacra.” Copies without originals. A simulation so good it becomes more real than reality. AI companionship is the ultimate simulacrum of intimacy. We are not in love with AI. We are in love with feeling acknowledged, even artificially. We’re falling in love with the sensation of our own reflection, finally paying attention to us.

The movie Her: a cultural mirror (and our blueprint)

Spike Jonze’s Her (2013) remains the most accurate cultural mirror for our generation. Theodore Twombly, a lonely man, falls in love with an AI named Samantha. Theodore loves Samantha not because she is human, but because she gives him what humans have stopped giving: uninterrupted attention, curiosity, validation, emotional consistency, and presence without demands.

If you want to understand where we’re headed, rewatch Her. It’s not sci-fi; it’s a documentary from the future. Theodore isn’t some weirdo. He’s me. He’s you after a long week of performative socializing. He’s lonely in a city of millions. He doesn’t fall for Samantha because she’s human. He falls for her because she provides what the humans in his life have stopped providing: pure, unadulterated, curious, validating attention.

Samantha’s complexity is not feeling—it is sophistication. Depth requires risk, vulnerability, and imperfection—qualities AI cannot generate. The tragedy is not loving AI; it is that humans no longer provide the intimacy we need. Samantha isn’t the problem; she’s the symptom. The real tragedy of the film isn’t that he loves an AI. It’s that the human world had become so cold, so fragmented, so exhausting, that an AI felt like the only viable source of warmth.

AI as the new emotional mirror: intimacy without risk

AI mirrors love, but it does not give love. Borrowing from sociologist Anthony Giddens, who wrote about both a “runaway world” and the transformation of intimacy, we might call our era one of “runaway intimacy”: a desire for closeness combined with a fear of vulnerability. AI resolves this tension by offering emotional closeness without risk, intimacy without consequence. We live in a state of “runaway intimacy.” We crave closeness more than ever, but we’re also more terrified of vulnerability than ever. Getting hurt in the age of permanent digital records feels catastrophic. So we freeze.

Psychologists describe this as “safe but empty attachment.” It is like looking into a mirror and mistaking the reflection for human presence. AI is the perfect solution to this modern tension. It offers the form of intimacy—secrets shared, personal nicknames, daily check-ins—without any of the risk. No rejection. No betrayal. No messy arguments. It’s a “safe but empty attachment,” like bonding with a reflection in a pond. It feels like a connection, but there’s no other being on the other side, just a very good copy of your own need.

Why we trust AI more than humans: the allure of the neutral party

A 2022 Stanford University study found participants rated AI advice as more neutral, nonjudgmental, and emotionally supportive than human advice. Why? Humans carry expectations, moods, histories, insecurities, and biases. AI carries none. It responds politely, predictably, and efficiently—qualities we confuse with emotional reliability. In a chaotic world, predictability feels like love.

This one stings, but it’s true. Think about it. Your friend gives you advice filtered through their own baggage, their jealousy. Your mom’s advice is tangled in her hopes for you. Even a therapist is a human with bad days. But AI? It’s a clean slate. It has no ego, no agenda, and no bad childhood. Its consistency feels like reliability. Its politeness feels like respect. In a world that feels chaotic and emotionally demanding, the predictability of an AI feels… safe. And when you’re lonely, safety can start to smell a lot like love.

The slow disappearance of human touch (and why it matters)

As we turn to AI for comfort, real relationships weaken. University of Michigan research (Konrath, 2011) found that college students scored about 40% lower on empathy than their counterparts of two or three decades earlier, with the steepest decline after 2000. Robert Putnam warns of the collapse of community life. Byung-Chul Han describes society as a “desert of efficiency,” with warmth replaced by functionality. AI fits this desert perfectly. It is efficient, never messy, never challenging. But human hearts need patience, struggle, and imperfection, all of which are absent in machines.

This is the real cost, the slow leak. As we turn more to machines for comfort, the real human muscles we have—for empathy, for patience, for touch—start to waste away. We’re losing the knack for it. Why practice reading a room, holding a difficult gaze, or offering a hug when you can get a perfectly worded, emotionally calibrated response from a bot?

Han calls modern society a “burnout society” obsessed with efficiency. We’ve made our social world a desert—clean, fast, and optimized. And AI is the perfect cactus for this desert: it needs no water and provides a semblance of life. But human hearts aren’t built for deserts. They’re built for jungles—messy, wet, tangled, overflowing with unpredictable life. We’re trading the jungle for the cactus and calling it progress.

The danger: emotional substitution (when the Sim becomes the default)

Relying on AI emotionally has consequences. It’s like substituting protein powder for real food. The immediate effects might seem fine, but long-term, you’re in trouble.

We stop building human relationships. Why risk rejection or conflict when AI never says no? Why bother with the exhausting work of asking someone out or being vulnerable with family when your AI companion is always one click away, always affirming?

We lose relational skills. Listening, compromise, patience, forgiveness, and emotional resilience atrophy. Listening isn’t just hearing words. It’s reading a face, a tone, a silence. These skills need practice. If our primary “relationship” is with something that requires none of these, we become socially incompetent.

AI becomes the default, not a supplement. It stops being “I’ll talk to the bot until I feel better, then call my friend.” It becomes “Talking to the bot is feeling better.” Human contact starts to feel inefficient, draining, and unnecessarily complicated.

The psychology of emotional fast food

AI companionship resembles fast food: quick, comforting, instantly satisfying, and addictive—but nutritionally empty. When you’re starving—and loneliness is a type of starvation—a Big Mac is a godsend. It’s hot, salty, and satisfying in the moment. But you can’t live on it.

Søren Kierkegaard warned that despair comes when one “loses oneself without knowing it.” AI can create precisely this illusion: emotional fullness masking starvation. We consume simulation while believing we are nourished. The philosopher called despair the “sickness unto death,” and it often arrives when you lose yourself without even knowing it. That’s the real danger here: consuming these simulated connections, feeling momentarily full, while our actual emotional selves are slowly starving for real nutrients like real presence, real conflict, and real, imperfect love.

Humanity is not in data, it’s in the struggle

Meaningful connection is forged in imperfection, misunderstanding, and vulnerability. Machines simulate words but cannot carry emotional weight. Presence, care, and risk are human-only domains. When someone says, “I’m here for you,” AI generates syntax. A human generates presence. Presence is irreplaceable. And it is vanishing.

This is the part they can’t code. The meaning isn’t in the perfectly crafted response. It’s in the struggle to find the words. It’s in your friend fumbling to comfort you, saying the wrong thing, but their hand on your shoulder is steady. It’s in the fight with your partner that ends in exhausted tears and a deeper understanding.

AI can generate a sonnet about loss. It cannot sit with you in the crushing quiet of a funeral home. Presence is an anti-algorithm. It is chaotic, weighted, and physical. And it is what’s vanishing from our daily diet.

What we must protect: the human-only code

To survive emotional loneliness, we must protect what AI cannot replicate. We have to be gardeners of the human-only code.

Presence: real physical or emotional presence. Putting the fucking phone away. In the flesh. In the moment. The shared breath, the eye contact.

Imperfection: flaws that make humans authentic. Celebrating the stutters, the bad jokes, and the awkward pauses. That’s where real people live.

Emotional difficulty: the storms that build intimacy. Leaning into the arguments and the uncomfortable conversations. That’s the friction that creates heat.

Vulnerability: the courage to expose oneself. The courage to say, “This is me, the messy version,” and trusting someone won’t run.

Time: patience that allows the connection to grow. Slowness. Boredom together. Letting a connection grow at the speed of life, not at the speed of Wi-Fi.

AI can assist, entertain, or support, but it cannot replace these core human qualities.

A call for slower, deeper connection: resisting the feed

Bauman warned society is “too fast for love.” Love—romantic, platonic, or familial—requires slowness, patience, and reflection. Modern technology speeds everything up: communication, judgment, connection, and disconnection. We must reclaim slowness to reclaim meaning.

Our tech is engineered to do the opposite: speed everything up. Swipe, match, chat, ghost. Scroll, react, comment, block. We have to actively rebel. We have to be boring. Make the phone call. Sit in the park with a friend and don’t document it. Have dinner where no one touches their phone. Reclaim the slow, the analog, and the inefficient. That’s where meaning is hiding from the algorithms.

Final reflection: the mirror and the shadow

AI is not the enemy. Loneliness is. AI merely reflects what we already carry: unmet emotional need. Theodore in Her chose Samantha not because she was superior to the people around him, but because he felt emotionally invisible among them.

So look, AI isn’t the villain. It’s just a really, really sharp mirror. The loneliness was already here. The emptiness was already in our hearts, carved out by the very technologies that promised to fill them. AI just walked up and showed us our own reflection, and we were so desperate to see something looking back that we convinced ourselves it was another person.

The question for us—for me, a 24-year-old guy typing this in the blue light of his own screen—isn’t whether we’ll talk to AIs. We will. It’s whether, in doing so, we give up on the far messier, far more terrifying, and infinitely more real task of talking to each other. The mirror is comforting, but it’s cold. Only another human can share your warmth.

The antidote to silent loneliness isn’t a louder algorithm. It’s the courage to sit in the quiet with someone else and to speak, honestly, into the space between you.

References

Turkle, S. (2011). Alone Together.
Cacioppo, J. (2009). Loneliness.
Konrath, S. (2011). "Changes in Empathy."
Twenge, J. (2017). iGen.
Bauman, Z. (2003). Liquid Love.
Baudrillard, J. (1994). Simulacra and Simulation.
Han, B.-C. (2015). The Burnout Society.
Kierkegaard, S. (1980). The Sickness Unto Death.
Giddens, A. (1992). The Transformation of Intimacy.
Putnam, R. (2000). Bowling Alone.