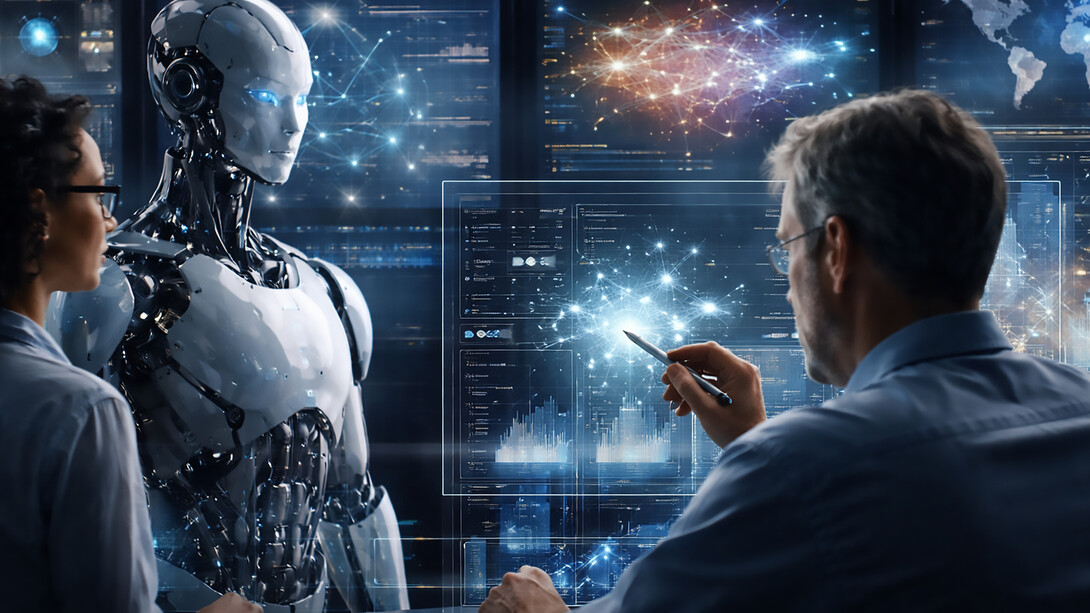

Artificial intelligence today performs tasks that once required expert intuition: diagnosing faults in satellites, allocating wireless spectrum, generating entire business plans, and even interpreting complex human emotions. We are witnessing an acceleration of capability unlike any previous technological epoch. Yet, paradoxically, the more powerful these systems become, the more urgent a simple truth appears: intelligence without humanity becomes directionless.

This is the essence of Human-in-the-Loop (HITL) AI—a model where human judgment, ethics, and contextual understanding remain embedded in every stage of an intelligent system’s lifecycle. In an era dominated by automation narratives, HITL is not a nostalgic attachment to the past; it is a design philosophy for a more stable and responsible future.

Why humans remain necessary in intelligent systems

Contrary to popular imagination, AI does not “understand” the world the way we do. Algorithms see correlations, patterns, and probabilities—but not meaning. They lack lived experience, emotional nuance, and moral intuition.

Even the most advanced AI models today struggle with three persistent limitations. Context sensitivity remains a challenge because AI excels when rules are stable, while human reality is full of exceptions—subtle cultural cues, ethical grey zones, and evolving risks that no dataset can fully encode. Rare events also pose a problem: AI learns from historical data, but in critical systems such as space missions, healthcare, and finance, the most dangerous events are precisely those with little precedent. Finally, value interpretation remains unresolved. AI can optimize metrics, but it cannot define values. Whether an outcome is ethical rather than merely optimal is not a mathematical question—it is a human one. Human-in-the-loop frameworks recognize this gap not as a flaw, but as a natural division of labor: machines compute, and humans interpret.

What human-in-the-loop really means

The phrase often gets misused. Human-in-the-loop is not a human pressing a “confirm” button after an AI recommendation. It is a continuum of collaborative intelligence spanning multiple layers.

At the data level, people label, curate, and correct datasets, particularly for sensitive tasks such as satellite imagery classification, medical diagnostics, or sentiment interpretation. Without this human grounding, AI inevitably learns the wrong lessons. At the model level, experts interact with AI during its learning process, adjusting parameters, guiding exploration, and defining what “good performance” means. Models do not improve by accident; they improve by negotiation. At the decision level, humans remain the final authority, with AI functioning as an advisor rather than a decision-maker. This is especially crucial in safety-critical environments such as aviation, autonomous vehicles, orbital maneuvers, and emergency response systems. Finally, at the governance level—often overlooked—humans define policies, ethical boundaries, escalation paths, transparency requirements, and acceptable risk thresholds. A loop, by definition, repeats, and in HITL systems, feedback is continuous rather than terminal.

Where AI excels—and where humans must intervene

Modern AI is exceptional at scale: it can process millions of data points, weigh thousands of variables, and return inferences in near real time. What it lacks is discernment.

AI performs best in situations involving real-time anomaly detection, pattern discovery across large datasets, predictive modeling under stable conditions, repetitive or labor-intensive analysis, and rapid simulation across multiple scenarios. Humans must lead when ethical trade-offs are involved, when environments are uncertain, when low-probability but high-impact events occur, when conflict resolution involves human stakeholders, or when decisions carry political, social, or cultural consequences. Synthetic intelligence is powerful, but human wisdom remains irreplaceable.
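The division of labor above can be written down as an explicit routing rule. The following is a minimal sketch, not a prescribed implementation: the `Task` fields and the `who_leads` function are hypothetical names introduced here for illustration, and a real system would need far richer criteria than four boolean flags.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical description of a decision the system is about to make."""
    name: str
    ethical_tradeoff: bool = False        # outcome involves competing values
    uncertain_environment: bool = False   # conditions differ from what the model has seen
    high_impact_rare_event: bool = False  # low probability, severe consequences
    affects_stakeholders: bool = False    # political, social, or cultural consequences


def who_leads(task: Task) -> str:
    """Return "human" if any condition named in the text applies, else "ai"."""
    needs_human = (
        task.ethical_tradeoff
        or task.uncertain_environment
        or task.high_impact_rare_event
        or task.affects_stakeholders
    )
    return "human" if needs_human else "ai"


# Illustrative tasks (assumed examples, not taken from a real mission).
routine = Task("telemetry anomaly scan")
maneuver = Task("collision avoidance burn", high_impact_rare_event=True)
print(who_leads(routine), who_leads(maneuver))
```

The point of making the rule explicit is that it can be reviewed, debated, and changed by people, rather than being buried in a model's behavior.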

A more human purpose for AI

In industries ranging from aerospace to education, there is growing pressure to automate everything. Yet full automation—particularly of judgment—is neither realistic nor desirable. The real question is not whether AI should replace humans, but how it should amplify human capability.

Human-in-the-loop models contribute in three essential ways. They increase trust, because people are more likely to rely on systems they can understand and influence; opaque decision-making justified by algorithmic authority is not a viable societal foundation. They ensure accountability, because when outcomes affect public safety or human dignity, responsibility cannot be outsourced to an algorithm. Finally, they preserve adaptability: human systems evolve, while AI models remain static until retrained, and human oversight ensures resilience amid political, regulatory, and operational change.

The space and telecommunications perspective

In space systems—an area rapidly transformed by satellite constellations, onboard autonomy, and automated mission planning—human-in-the-loop has particular significance. Orbital conditions change unpredictably, space weather events can disrupt even the most robust predictions, and frequency interference issues involve regulatory and geopolitical dimensions. Deep-space missions also raise ethical questions concerning scientific priority and risk tolerance. Fully autonomous systems may be faster, but they are rarely wiser.

Human-in-the-loop oversight becomes essential in areas such as onboard fault protection logic, constellation coordination, Earth observation anomaly interpretation, deep-space trajectory correction approval, planetary protection decisions, and spectrum conflict arbitration. Many successful missions reflect a careful symbiosis between algorithmic precision and human judgment.

The hidden risk: over-trusting automation

As AI grows more sophisticated, a paradox emerges: users tend to trust it more even as they understand it less. This can lead to over-reliance on predictive models in ambiguous situations, reduced scrutiny of automated decisions, invisible propagation of bias, and automation complacency that erodes human skill. Human-in-the-loop mitigates these risks only when implemented rigorously. A human signature on an AI-generated decision is meaningless unless the review is informed, contextual, and empowered.

Designing responsible HITL systems

A functional human-in-the-loop system requires deliberate architecture. Clear intervention points must exist so humans know when and why they are expected to review or override decisions. AI behavior must be transparent and explainable, as evaluation is impossible without understanding. Human reviewers must possess genuine domain expertise rather than symbolic authority, particularly in fields such as aviation safety, telecommunications regulation, mission planning, and healthcare. Human review must feed back into the AI system rather than serve as a passive check. Increasingly, HITL is also a regulatory requirement: the EU AI Act mandates human oversight for high-risk AI systems, and similar expectations are appearing in emerging space safety standards and medical AI governance.
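As a rough illustration of these requirements, the sketch below models one intervention point. Everything in it is an assumption made for the example: the `Recommendation` and `ReviewGate` names, the confidence threshold of 0.9, and the use of the model's self-reported confidence as the escalation trigger (which is only meaningful if that score is well calibrated). The reviewer receives the explanation along with the recommendation, and any override is recorded with its rationale so it can inform later retraining.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Recommendation:
    action: str
    confidence: float  # model's self-reported score in [0, 1] (assumed calibrated)
    explanation: str   # shown to the human reviewer


# A reviewer takes a recommendation and returns (final_action, rationale).
Reviewer = Callable[[Recommendation], Tuple[str, str]]


@dataclass
class ReviewGate:
    """Hypothetical intervention point: escalate low-confidence recommendations."""
    threshold: float = 0.9
    # Each entry: (ai_action, human_action, rationale), kept for retraining.
    feedback: List[Tuple[str, str, str]] = field(default_factory=list)

    def decide(self, rec: Recommendation, reviewer: Reviewer) -> str:
        if rec.confidence >= self.threshold:
            return rec.action  # no escalation needed under this policy
        final_action, rationale = reviewer(rec)
        if final_action != rec.action:
            # The override flows back into the system instead of being discarded.
            self.feedback.append((rec.action, final_action, rationale))
        return final_action


def cautious_reviewer(rec: Recommendation) -> Tuple[str, str]:
    # Stand-in for a domain expert; always holds and states why.
    return "hold", "explanation does not account for current conditions"


gate = ReviewGate(threshold=0.9)
first = gate.decide(Recommendation("proceed", 0.97, "nominal telemetry"), cautious_reviewer)
second = gate.decide(Recommendation("proceed", 0.62, "sparse data"), cautious_reviewer)
print(first, second, len(gate.feedback))
```

A confidence threshold is only one possible trigger; the same structure could escalate on task type, novelty, or regulatory category instead.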

From automation to augmentation

The most significant shift is conceptual. AI delivers the most value not when it removes humans from decision-making but when it frees them to focus on what machines cannot replicate: creative reasoning, ethical reflection, strategic judgment, empathy, and foresight. Human-in-the-loop is not a constraint; it is an opportunity to design systems that elevate human capability rather than replace it.

A more responsible future of intelligence

AI is becoming embedded in global infrastructure, shaping decisions that ripple across societies, ecosystems, and even Earth’s orbit. As these systems advance, the presence of a thoughtful, trained, and accountable human becomes a stabilizing force rather than a limitation. Machine intelligence may be fast and vast, but human intelligence remains the compass. Human-in-the-loop ensures that technological trajectories remain aligned with empathy, context, and purpose—qualities that no algorithm can fully synthesize and perhaps never should.