AI is bringing great changes to today’s world. On the one hand, it is driving growth and creating new opportunities; on the other, it is also harming people. Examples include job losses, fake videos and photos, and false information.

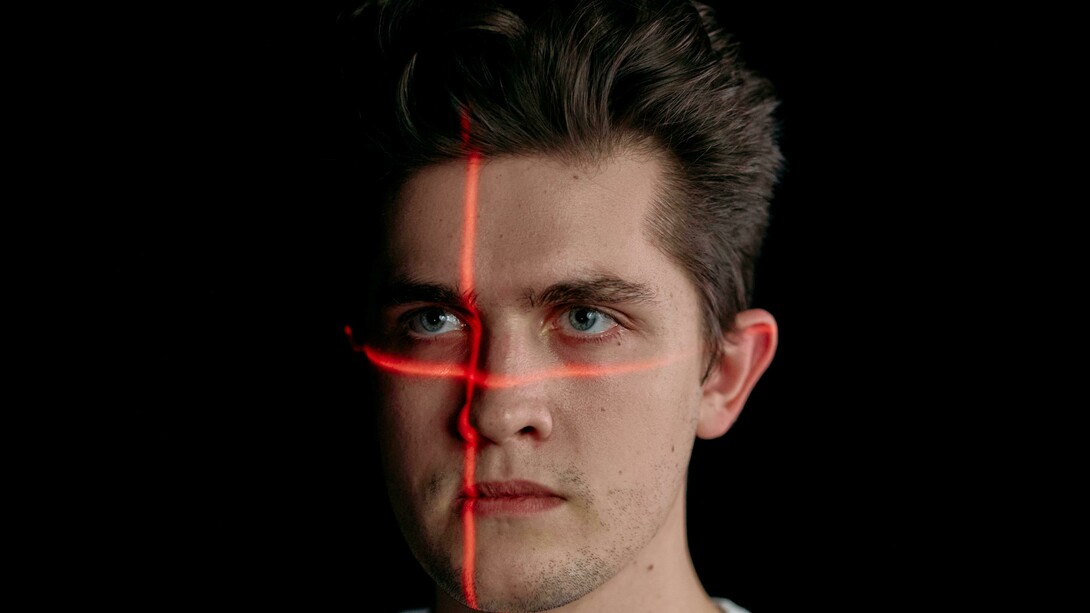

In today’s hyperconnected world, it is becoming increasingly challenging to distinguish what is real from what is not. People may believe a surprising video, only to find out later that it was made with AI. The rise of such deepfakes (hyperrealistic, AI-generated videos) is doing more harm than good, blurring the line between truth and deception. From fake celebrity clips to manipulated political speeches, deepfakes are reshaping how we perceive reality and trust digital content.

What are deepfakes?

Before weighing the good and bad sides of deepfakes, let’s look at what they really are. The term “deepfake” combines “deep learning” and “fake.” Deepfakes use AI algorithms, such as generative adversarial networks (GANs), in which one network generates fakes while a second network tries to tell them apart from real examples, to create realistic fake media.

Deepfakes are not limited to creating a person’s lookalike; they can also clone a person’s voice. AI-generated photos with realistic backgrounds are popular as well.

How are they made?

Initially, creating deepfakes required technical know-how. With readily available tools and software, however, it has become much easier to generate photos of any scene featuring any person, even with apps on your phone. The process of creating a deepfake usually involves:

Collecting hundreds of images or videos of a target person.

Training a neural network to map their facial patterns and movements.

Superimposing or blending that model onto another video or image.
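
To make the last step a little more concrete, here is a minimal sketch of blending in Python. It is an illustration under loud assumptions, not how any particular deepfake tool works: the images are tiny grayscale grids of numbers, and the function name `blend_pixels` and the `mask` values are hypothetical. Real tools generate the face with a trained neural network and blend it using far more sophisticated methods.

```python
def blend_pixels(source, target, mask):
    """Alpha-blend two equally sized grayscale images.

    Hypothetical helper for illustration only.
    mask values lie in [0, 1]: 1 keeps the source pixel, 0 keeps the target.
    """
    if not (len(source) == len(target) == len(mask)):
        raise ValueError("images and mask must have the same height")
    blended = []
    for src_row, tgt_row, mask_row in zip(source, target, mask):
        if not (len(src_row) == len(tgt_row) == len(mask_row)):
            raise ValueError("images and mask must have the same width")
        # Weighted average of the two pixels, rounded to a whole intensity.
        blended.append([
            round(m * s + (1 - m) * t)
            for s, t, m in zip(src_row, tgt_row, mask_row)
        ])
    return blended


# Toy 2x2 "images": a bright generated face patch over a darker target frame.
source = [[200, 200], [200, 200]]
target = [[100, 100], [100, 100]]
mask = [[1.0, 0.5], [0.0, 0.25]]  # assumed soft mask; softer edges hide the seam

result = blend_pixels(source, target, mask)
print(result)  # [[200, 150], [100, 125]]
```

The soft mask values between 0 and 1 are what make the pasted region fade into the original frame instead of showing a hard edge.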

A comparison of the positive and negative sides of deepfakes

As artificial intelligence grows more powerful, the line between real and fake blurs further every day. On the one hand, the technology brings benefits and completes certain tasks quickly; on the other, it negatively affects the daily lives of many people.

The dark side of deepfakes

While deepfake technology can be used for entertainment purposes, it also opens the door to serious ethical, religious, social, and political risks.

Deepfakes can be used to spread false information involving political figures and other public personalities. This can seriously harm diplomatic relations between countries. Many victims, often women, have found their faces in non-consensual explicit videos. This causes them serious problems, and they often struggle to prove that the content is fake because the videos and photos look so real.

Scammers are using AI-generated voices to impersonate CEOs or family members, tricking people into transferring money. They are also using this technology to extract sensitive information from their targets.

Perhaps the most dangerous consequence is what experts call the “liar’s dividend.” When anything can be faked, anyone can claim that inconvenient truths are fake. This severely erodes public trust, to the point where people may doubt even genuine evidence.

The bright side of deepfakes with ethical and creative uses

Deepfake technology is not intrinsically harmful. Despite the risks involved, its impact depends on how it is applied, as is the case with most technologies.

For example, in film and entertainment, deepfakes can save production time and money by recreating historical figures or de-aging performers. In education, lessons can be translated into numerous languages, widening access to education globally.

In healthcare, deepfake-based simulations can support patients with speech difficulties, aid facial reconstruction, and help train physicians.

To put it another way, if used properly, the same instruments that can be used to deceive can also be used to teach, amuse, and heal.

How can we detect and combat deepfakes?

Although most deepfake videos and photos look real, they still contain inconsistencies. Signs such as unnatural eye movements, lighting mismatches, or audio-visual misalignment can reveal that a video is fake.

Beyond that, regulations can be implemented to curb the spread of false information. Blockchain verification and digital watermarking are among the measures that can help establish the authenticity of photos and videos.
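
The idea behind such verification schemes can be sketched in a few lines of Python. To be clear, this is neither a watermark nor a blockchain: it swaps in a simpler technique, a keyed cryptographic fingerprint (HMAC with SHA-256 from Python's standard library), to show how a publisher could let others check that a file has not been altered. The function names `sign_media` and `verify_media`, the key, and the sample bytes are all hypothetical, and real provenance systems generally use public-key signatures rather than a shared secret.

```python
import hashlib
import hmac


def sign_media(data: bytes, key: bytes) -> str:
    """Compute a keyed SHA-256 fingerprint (HMAC) of the media bytes."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def verify_media(data: bytes, key: bytes, tag: str) -> bool:
    """Check that the media bytes still match the published fingerprint."""
    expected = sign_media(data, key)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, tag)


# Assumed example values; in practice `original` would be the file's contents.
key = b"publisher-secret-key"
original = b"raw bytes of the original video"
tampered = b"raw bytes of the edited video"

tag = sign_media(original, key)
print(verify_media(original, key, tag))  # True
print(verify_media(tampered, key, tag))  # False
```

Changing even a single byte of the file produces a different fingerprint, which is why an edited copy fails the check.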

As users, it is also our responsibility to make sure the information we share is correct. We, as digital citizens, must learn to question what we see. Before believing or sharing a shocking video, we must verify the source.

Final words

Advances in technology bring both benefits and harms; the outcome depends on users and how they use it. Deepfakes represent both the brilliance and the danger of artificial intelligence. Some people, however, are more inclined to misuse the technology and spread false information than to put it to valuable use. In the end, it comes down to preserving truth and transparency, which are the best defense against false information.